Why Include Single Case Design Studies in the Review?

Single case research design is an experimental methodology (Shadish, Hedges, Horner, & Odom, 2015). It tests the causal relationship between an independent variable (e.g., focused intervention practices) and a dependent variable (e.g., outcomes for children and youth with autism). Like experimental group design, the methodology is arranged to rule out threats to internal validity (Campbell & Stanley, 1963). High-quality single case design studies include detailed descriptions of participants, repeated and reliable measurement of the dependent variables, precise specification of the independent variable, and at least three demonstrations of the functional relationship between the independent and dependent variables (i.e., when the intervention is implemented, a change occurs in the child outcome) (Horner et al., 2005). Historically, data have been analyzed through visual inspection of graphed data by trained professionals (Kazdin, 2011); statistical analyses have since been developed to aid in that analysis (Kratochwill & Levin, 2014). With single case design, external validity is built primarily through direct and systematic replications of intervention effects across multiple studies by different researchers and/or research groups. A detailed description of the logic and types of single case designs may be found in Horner and Odom (2014).
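As an illustration of the kind of statistical aid that can supplement visual inspection, the sketch below computes one simple nonoverlap index, Nonoverlap of All Pairs (NAP), for a single baseline (A) versus intervention (B) contrast. NAP is our illustrative choice and is not a method this review prescribes; the function name `nap`, the `increase_is_improvement` flag, and the data values are hypothetical. Following the usual NAP definition, every baseline point is compared with every intervention point, and ties count as half a pair.

```python
from itertools import product


def nap(baseline, treatment, increase_is_improvement=True):
    """Nonoverlap of All Pairs (NAP) for one A-B phase contrast.

    Hypothetical helper for illustration: returns the proportion of
    (baseline, treatment) pairs that show improvement, counting ties as 0.5.
    """
    if not baseline or not treatment:
        raise ValueError("both phases need at least one data point")
    score = 0.0
    for a, b in product(baseline, treatment):
        # Direction of "improvement" depends on the outcome being measured.
        diff = b - a if increase_is_improvement else a - b
        if diff > 0:
            score += 1.0
        elif diff == 0:
            score += 0.5
    return score / (len(baseline) * len(treatment))


# Hypothetical data: 4 baseline sessions, 5 intervention sessions.
print(nap([2, 3, 2, 4], [5, 6, 4, 7, 8]))  # 0.975
```

A value near 0.5 indicates chance-level overlap between phases, and 1.0 indicates complete nonoverlap. An index like this summarizes one A-B contrast only; it does not by itself establish the three demonstrations of a functional relationship that a high-quality design requires.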

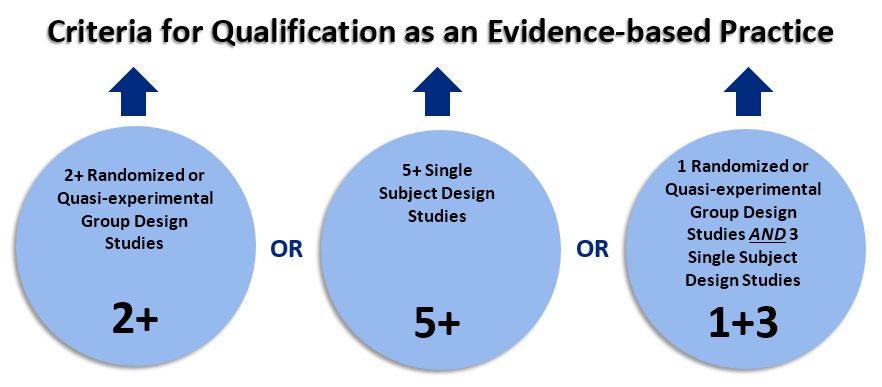

For NCAEP, we decided to include single case design research as evidence for the efficacy of focused intervention practices. The rationale is that the methodology is experimental in nature, and with sufficient replication by different research groups, single case design studies can provide evidence as substantial as that from experimental group designs. Under the NCAEP criteria, a focused intervention practice supported only by single case design evidence is considered evidence-based when there are:

- At least five single case design studies that reviewers verified as high quality;

- Studies conducted by at least three different research groups; and

- A cumulative total of at least 20 participants with ASD across those studies.
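The three thresholds above can be expressed as a simple check. This is a minimal sketch, assuming each study record has already been verified as high quality by reviewers and carries a label identifying its research group; the `SCDStudy` class, its fields, and `meets_scd_only_criteria` are hypothetical names, not part of any NCAEP tool. How reviewers decide that two studies come from different research groups is not captured here.

```python
from dataclasses import dataclass


@dataclass
class SCDStudy:
    """Hypothetical record for one high-quality single case design study."""
    citation: str
    research_group: str      # assumed label identifying the research group
    n_participants_asd: int  # participants with ASD in this study


def meets_scd_only_criteria(studies, min_studies=5, min_groups=3, min_participants=20):
    """Check the three thresholds for a practice with only SCD evidence.

    Assumption: every study passed in was already verified as high quality.
    """
    enough_studies = len(studies) >= min_studies
    enough_groups = len({s.research_group for s in studies}) >= min_groups
    enough_participants = sum(s.n_participants_asd for s in studies) >= min_participants
    return enough_studies and enough_groups and enough_participants


# Hypothetical example: five studies, three groups, 4 participants each (20 total).
studies = [
    SCDStudy("Study A", "Group 1", 4),
    SCDStudy("Study B", "Group 1", 4),
    SCDStudy("Study C", "Group 2", 4),
    SCDStudy("Study D", "Group 2", 4),
    SCDStudy("Study E", "Group 3", 4),
]
print(meets_scd_only_criteria(studies))  # True
```

All three conditions must hold at once: dropping any one study in the example leaves only 16 participants and fails the check.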

We realize that other systematic review projects have not included single case design studies as evidence of efficacy. As stated in our previous review of the focused intervention literature (Wong et al., 2014), “By excluding SCD studies, such reviews a) omit a vital experimental research methodology now being recognized as a valid scientific approach (Kratochwill et al., 2013) and b) eliminate the major body of research literature on interventions for children and youth with ASD.”

References

Campbell, D. T., & Stanley, J. C. (1963). Experimental and quasi-experimental designs for research. Chicago: Rand McNally.

Chambless, D. L., Sanderson, W. C., Shoham, V., Bennett Johnson, S., Pope, K. S., Crits-Christoph, P., Baker, M., Johnson, B., Woody, S. R., Sue, S., Beutler, L., Williams, D. A., & McCurry, S. (1996). An update on empirically validated therapies. Clinical Psychologist, 49, 5-18.

Council for Exceptional Children. (2014). Council for Exceptional Children standards for evidence-based practices in special education. Arlington, VA: Author.

Horner, R., Carr, E., Halle, J., McGee, G., Odom, S., & Wolery, M. (2005). The use of single-subject research to identify evidence-based practice in special education. Exceptional Children, 71, 165-180.

Horner, R. H., & Odom, S. L. (2014). Constructing single-case research designs: Logic and options. In T. R. Kratochwill & J. R. Levin (Eds.), Single-case intervention research: Methodological and data-analysis advances (pp. 27-52). Washington, DC: American Psychological Association.

Kazdin, A. E. (2011). Single-case research designs (2nd ed.). New York, NY: Oxford University Press.

Kratochwill, T. R., Hitchcock, J., Horner, R. H., Levin, J. R., Odom, S. L., Rindskopf, D. M., & Shadish, W. R. (2010). Single case design technical document, Version 1.0. Washington, DC: Institute of Education Sciences. Retrieved from https://ies.ed.gov/ncee/wwc/Docs/ReferenceResources/wwc_scd.pdf

Kratochwill, T. R., Hitchcock, J. H., Horner, R. H., Levin, J. R., Odom, S. L., Rindskopf, D. M., & Shadish, W. R. (2013). Single-case intervention research design standards. Remedial and Special Education, 34, 26–38.

Kratochwill, T. R., & Levin, J. R. (Eds.). (2014). Single-case intervention research: Methodological and data-analysis advances. Washington, DC: American Psychological Association.

Kratochwill, T. R., & Stoiber, K. C. (2002). Evidence-based interventions in school psychology: Conceptual foundations of the Procedural and Coding Manual of Division 16 and the Society for the Study of School Psychology Task Force. School Psychology Quarterly, 17, 341–389.

Shadish, W. R., Hedges, L. V., Horner, R. H., & Odom, S. L. (2015). The role of between-case effect size in conducting, interpreting, and summarizing single-case research. Paper commissioned by the Institute of Education Sciences. Retrieved from https://ies.ed.gov/pubsearch/pubsinfo.asp?pubid=NCSER2015002

Wong, C., Odom, S. L., Hume, K., Cox, A. W., Fettig, A., Kucharczyk, S., … Schultz, T. R. (2014). Evidence-based practices for children, youth, and young adults with Autism Spectrum Disorder. Chapel Hill: The University of North Carolina, Frank Porter Graham Child Development Institute, Autism Evidence-Based Practice Review Group. Retrieved from https://autismpdc.fpg.unc.edu/sites/autismpdc.fpg.unc.edu/files/imce/doc...